At the end of April, scientists at IBM announced two critical advances that could eventually lead to the development of a quantum computer. For the first time, they demonstrated an ability to detect and measure both kinds of quantum errors simultaneously, and they demonstrated a new square quantum bit circuit design that could successfully scale to larger dimensions.

Baseline caught up with Jay Gambetta, manager of the Theory Quantum Information Group at IBM, and tapped his thinking about how these developments could affect the technology and business worlds.

Baseline: What is quantum computing, and why should business and IT executives pay attention to this technology?

Jay Gambetta: Quantum computing is an alternative to classical computing, and, as the name implies, it uses quantum mechanics to compute. Unlike a classical bit, which must be either a zero or a one at any given moment, a quantum bit can be in both a zero and a one state at the same time. This is known as a “superposition.”

Quantum computing uses these superpositions, as well as another concept called entanglement, to compute in an entirely different way. It uses qubits [quantum bits] that can compute over multiple paths simultaneously. In a practical sense, a quantum computer would deliver answers at far greater speeds than today’s digital computers, including supercomputers.
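For readers who want to see the arithmetic behind superposition and entanglement, here is a minimal sketch in plain Python. It is an illustration only, not IBM's software: the function names (`hadamard`, `probabilities`, `bell_state`) are hypothetical, and a qubit is modeled simply as a list of amplitudes whose squares give the measurement probabilities.

```python
import math

def hadamard(state):
    """Apply a Hadamard gate to a single-qubit state [amp0, amp1]."""
    a0, a1 = state
    s = 1 / math.sqrt(2)
    return [s * (a0 + a1), s * (a0 - a1)]

def probabilities(state):
    """Probability of each measurement outcome: the squared amplitude."""
    return [abs(a) ** 2 for a in state]

def bell_state():
    """Build an entangled two-qubit state, amplitudes ordered |00>, |01>, |10>, |11>."""
    s = 1 / math.sqrt(2)
    # A Hadamard on the first qubit of |00> gives (|00> + |10>) / sqrt(2).
    state = [s, 0.0, s, 0.0]
    # A CNOT (first qubit controls the second) swaps the |10> and |11> amplitudes.
    state[2], state[3] = state[3], state[2]
    return state

zero = [1.0, 0.0]                 # a qubit that is definitely 0
plus = hadamard(zero)             # an equal superposition of 0 and 1
probs = probabilities(plus)       # roughly 0.5 for each outcome
bell_probs = probabilities(bell_state())  # only 00 and 11 ever occur
```

In the superposition, a measurement returns 0 or 1 with about equal probability. In the entangled state, the two qubits always agree (00 or 11), even though neither outcome is fixed in advance.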

Baseline: Is a quantum computer simply faster, or will it address new and different tasks?

Gambetta: It would be much faster, but there’s a difference between what you can do classically and what you can do with quantum.

Historically, quantum computers have been explored as a cryptography tool. But nature is fundamentally quantum, and these computers could possibly be used to explore chemical reactions and things in nature. This could, for example, result in new drugs. More broadly, anything that requires an understanding of quantum physics could advance through this approach.

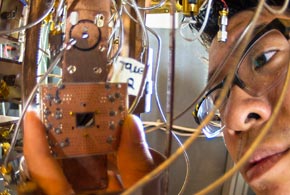

Baseline: What does the IBM qubit circuit look like?

Gambetta: It is based on a square lattice of four superconducting qubits on a chip that’s roughly one-quarter-inch square. This enables both types of quantum errors to be detected at the same time. The square-shaped design avoids the main limitation of a linear array, which prevents both kinds of quantum errors from being detected simultaneously.

The square has allowed us to go to the next stage. It allows us to detect both bit-flip and phase-flip errors. This is all necessary for a quantum computer. We are now at the starting point for more complicated systems.
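To make the two error types concrete, here is a simplified sketch in plain Python of how two parity checks can flag them. It rests on stated assumptions rather than on IBM's actual control code: it models only two data qubits in an entangled state, leaves out the measurement qubits and any hardware noise, and uses hypothetical function names (`apply_x`, `apply_z`, `zz_parity`, `xx_parity`, `syndrome`). A bit flip changes the first parity check, and a phase flip changes the second.

```python
import math

S = 1 / math.sqrt(2)
# Entangled reference state; amplitudes ordered |00>, |01>, |10>, |11>.
BELL = [S, 0.0, 0.0, S]

def apply_x(state, qubit):
    """Bit-flip error on `qubit` (0 = left bit, 1 = right bit)."""
    mask = 2 >> qubit  # qubit 0 -> mask 2, qubit 1 -> mask 1
    return [state[i ^ mask] for i in range(4)]

def apply_z(state, qubit):
    """Phase-flip error: negate amplitudes where `qubit` is 1."""
    mask = 2 >> qubit
    return [-a if i & mask else a for i, a in enumerate(state)]

def zz_parity(state):
    """Expectation of Z(x)Z: +1 weight for 00 and 11, -1 weight for 01 and 10."""
    return sum((1 if bin(i).count("1") % 2 == 0 else -1) * a * a
               for i, a in enumerate(state))

def xx_parity(state):
    """Expectation of X(x)X for real amplitudes; X(x)X maps index i to i ^ 3."""
    return sum(state[i] * state[i ^ 3] for i in range(4))

def syndrome(state):
    """Return (ZZ, XX); a -1 in either slot signals an error."""
    return (round(zz_parity(state)), round(xx_parity(state)))

no_error = syndrome(BELL)                            # (1, 1)
bit_flip = syndrome(apply_x(BELL, 0))                # (-1, 1)
phase_flip = syndrome(apply_z(BELL, 0))              # (1, -1)
both = syndrome(apply_z(apply_x(BELL, 0), 0))        # (-1, -1)
```

The pair of checks reveals that an error happened and which kind it was, but not which qubit was hit. That is detection rather than correction, which would require more qubits.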

Baseline: What are the biggest remaining challenges?

Gambetta: Quantum information is very fragile. Qubit technologies lose their information when interacting with matter and electromagnetic radiation.

However, we are now at the point where qubits can be designed and manufactured using standard silicon fabrication techniques. Once a handful of superconducting qubits can be manufactured reliably and repeatedly, and controlled with low error rates, there will be no fundamental obstacle to demonstrating error correction in larger lattices of qubits.

Baseline: What is the takeaway from this breakthrough, and how far away are researchers from achieving a quantum computer that could produce real-world results?

Gambetta: This experiment has helped move us from the theoretical to an ability to conduct more complicated experiments. Quantum computing appears to be about 10 to 20 years away.

We are still in the early stages, but we are beginning to see significant advancements in this space. The technology would help solve problems that we cannot solve today due to limitations in computing power.

Photo courtesy of IBM.