It was described by a former U.S. Air Force chief as the “most monumental non-nuclear explosion and fire ever seen from space,” with devastating results for a nation’s energy infrastructure – and it was set off by a programming routine in an oil pipeline management system.

An Al Qaeda plot in Iraq? No. This explosion rocked Tyumen, Siberia, in 1982, and it still reverberates with a valuable lesson for businesses operating in the post-9/11 era.

Can those entrusted with industrial information systems still afford to think of hackers or malicious software as an occasional annoyance that might tweak a company’s finances or reputation? How secure are the systems that control the valves and sensors responsible for the safe operation of a chemical plant or a nuclear reactor?

Traditionally, such systems benefited from “security through obscurity” – the use of idiosyncratic operating systems and network protocols. But today, more of them run on a commercial operating system such as Windows and communicate over Internet Protocol networks.

And that’s why securing industrial information systems should be on your 2005 to-do list, says the PA Consulting Group’s Justin Lowe, an industrial process control engineer who has spent the past several years focused on the security of these systems. Instead of just assuming inaccessibility, or depending on the protection of an Internet firewall, industrial organizations also need to stamp out vulnerabilities at the control software and operating system level.

The number of reported information security incidents affecting industrial systems rose sharply after 2001, according to a study by Lowe and British Columbia Institute of Technology cybersecurity researcher Eric Byres. After dismissing some reports as “urban myth,” their study found 34 incidents worthy of analysis, with the frequency of these reports rising to 10 in 2003 from one or two per year in the 1990s. Electric, gas, and waste-water utilities, electronics manufacturers, and petroleum companies all suffered credible security breaches, they concluded. It isn’t clear whether the statistics reflect a true increase in the frequency of attacks, the spread of more vulnerable systems, or simply better reporting – but the authors figure there are probably 10 to 100 unreported incidents for every one they’ve been able to study.
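To put that last estimate in perspective, the arithmetic can be worked through in a few lines of Python. This is only a back-of-the-envelope sketch: the 34 studied incidents and the 10-to-100 multiplier come from the study as described above, while the function and variable names are invented here for illustration.

```python
# Figures quoted from the Lowe and Byres study as described in this article.
STUDIED_INCIDENTS = 34
UNREPORTED_PER_STUDIED = (10, 100)  # the authors' rough low and high guesses


def unreported_range(studied, ratio):
    """Scale the studied incidents by the low and high multipliers."""
    low, high = ratio
    return studied * low, studied * high


low, high = unreported_range(STUDIED_INCIDENTS, UNREPORTED_PER_STUDIED)
print(f"Implied unreported incidents: {low:,} to {high:,}")
# prints "Implied unreported incidents: 340 to 3,400"
```

In other words, if the authors' guess is right, the 34 analyzed cases could stand in for somewhere between a few hundred and a few thousand incidents that never surfaced.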

In a documented instance from early 2003, the accidental introduction of the SQL Slammer worm into an Ohio nuclear power plant’s network disrupted operations, although, thankfully, it affected no functions critical to safety. According to the Nuclear Regulatory Commission, the worm infected the system via a contractor’s private network connection into the plant; the affected system had no Internet connection. The upshot: Even when an industrial network is not connected to the Internet, or the Internet connection is rigorously protected, dial-up modems or poorly secured laptops tethered to the plant network may provide backdoor entrances.

As in business networks, most incidents on industrial networks are inconsequential or cost little more than lost productivity, but the authors do cite examples of real-world consequences. In March 2000, a former consultant to Australia’s Maroochy Shire Council sewerage system triggered a spill of about a quarter-million gallons of waste into a river and coastal waters in Queensland. In 2001, the culprit was sentenced to two years in prison.

An even more dramatic example, illustrating the kind of cyber attack U.S. industry might face from terrorists or hostile foreign governments, comes from our own nation’s annals of espionage. According to At the Abyss, a history of the Cold War by Reagan-era Secretary of the Air Force Thomas C. Reed, the CIA once planted a Trojan Horse programming routine – a secret function hidden within the software – in pipeline equipment it knew would be stolen by the Soviet Union. At a predetermined time in June 1982, the software malfunctioned, leading to overpressurization of the Trans-Siberian natural gas pipeline, a rupture, and the aforementioned non-nuclear fireball, Reed says.

The intent, Reed explains, was to make the pipeline spring leaks, but the bug did its job a little too well. While there are no known human casualties from the explosion, which occurred in a remote area, there surely could have been. Still, Reed believes the U.S. acted ethically because “we were at war, even if it was not a declared war with A-bombs…and a lot of people get killed in war.” Also, he says, such tricks were never attempted with legitimately purchased commercial products, only with contraband equipment.

According to Reed, Reagan-era CIA chief William Casey decided that rather than arresting the smugglers, the U.S. would “help them with their shopping” by feeding the Soviets defective hardware and software. The goal was to make the Soviets distrust their own technological base by leaving them unable to determine which systems had been subverted. And Reed says it worked, helping Reagan convince the Soviets they could not win an arms race that included a high-tech antimissile defense program. “That was an intimate part of the Star Wars confrontation that has not been well reported,” Reed adds.

The Spy vs. Spy incident highlights the sort of tactics that the U.S. and its industries must recognize could be turned against them. So while some information security experts dismiss the threat of cyberterrorism as overblown, “it’s not a bunch of hype at all,” Reed says, adding his opinion that the U.S. today is probably more vulnerable to this sort of attack than the Soviets were in the 1980s. “It’s a quarter-century later, and we’re a lot more software-dependent,” he says.

Meanwhile, garden-variety hackers are turning their attention to industrial networks, which they view as more challenging and rewarding break-in targets, says Lowe. “It could have more interesting effects, and once you’re in there it’s possibly easier because the systems are not as secured,” he adds.

Unless the intruder is an insider, such as a former employee, a hacker who gains access to an industrial network might not know how to target the precise control system that opens a valve and causes a chemical spill. But such knowledge wouldn’t necessarily be required “to cause some serious havoc,” Lowe says. Think of the Northeastern blackout of 2003. Even though it’s now believed to have been triggered by a tree falling across a power line in Ohio, it was exacerbated by the faulty design of the utility companies’ control software. Several security researchers mentioned the blackout as an example of the chaotic ripple effects possible in a networked system that could be triggered by an information security breakdown.

Some speculate that an infestation of Slammer, a network worm that replicates itself between SQL Servers and generates bogus network traffic, may have hampered the utilities’ response to the blackout, says Motorola Chief Information Security Officer William C. Boni. Simply clogging a network enough to interfere with delivery of important alarms could worsen a crisis, he says. “It doesn’t have to be something catastrophic like a terrorist attack – but it could be,” Boni says. Information security and physical security professionals need to collaborate more closely to guard against “blended threats,” in which a cyberattack might be used to amplify a physical attack, he says.

Byres and Lowe classified Reed’s Soviet cyberwarfare story in their study as a “likely but unconfirmed” example of real-world damage from a cyberattack. And while Western companies may not be in the business of stealing technology from abroad, Byres notes that much control software going into industrial equipment is written offshore in third-world nations, potentially by hostile programmers. So it would not be all that surprising, he says, if some of that software contained covert functions designed to cause a chemical spill, an electrical outage, an explosion or other industrial accident.

“I’d think that’s a distinct possibility, not at all unreasonable,” Reed agrees. His message for corporate technology managers: “When you get software from somewhere, you’d better know where they’re getting it from, and they’d better have integrity.”

Unfortunately, no corporate information security manager can afford to audit every piece of factory control software on the chance that some hypothetical foreign evildoer worked on it as a programmer. It’s up to the defense, intelligence, and homeland security agencies to warn against some threats if they become real, says John Dubiel, a Gartner Inc. consultant who counsels electric utilities on information security strategy. “If it’s a government that’s after you, and you’re a utility company, there’s not much you can do.”

Short of that extreme, however, Dubiel says electric utilities are a good example of an industry that’s taking information security threats seriously. For example, the North American Electric Reliability Council has drafted minimum information security standards and has the power to issue fines to utilities that fall short.

Nonetheless, practical details of upgrading industrial control systems can be very difficult. While an electrical control system might be running a version of Windows or Unix, it’s often a version that’s been specially tailored to a particular application. “Often vendors don’t let you upgrade your operating systems,” Dubiel says. “There are a lot of applications running on [Windows] NT 4.0 servers or 3.5 and are not certified to run on the next release up. You have applications that can’t be patched, so in a lot of cases it’s a replacement effort.”

Until recently, vendors of industrial software never felt the need to provide much more than basic password security, Dubiel says. But it’s clearly no longer enough to rely simply on firewalls and cutting off industrial networks from the Internet. Applications themselves need to be hardened against attack and regularly patched as vulnerabilities emerge, under the assumption that no network is 100% secure.

To do otherwise is like taking comfort in the chain-link fence around your factory – so much so that you never bother to lock the doors or screen the employees who will be entrusted with your most sensitive operations. Like that fence, the perimeter security on your network may be good – but that doesn’t mean it’s sufficient.

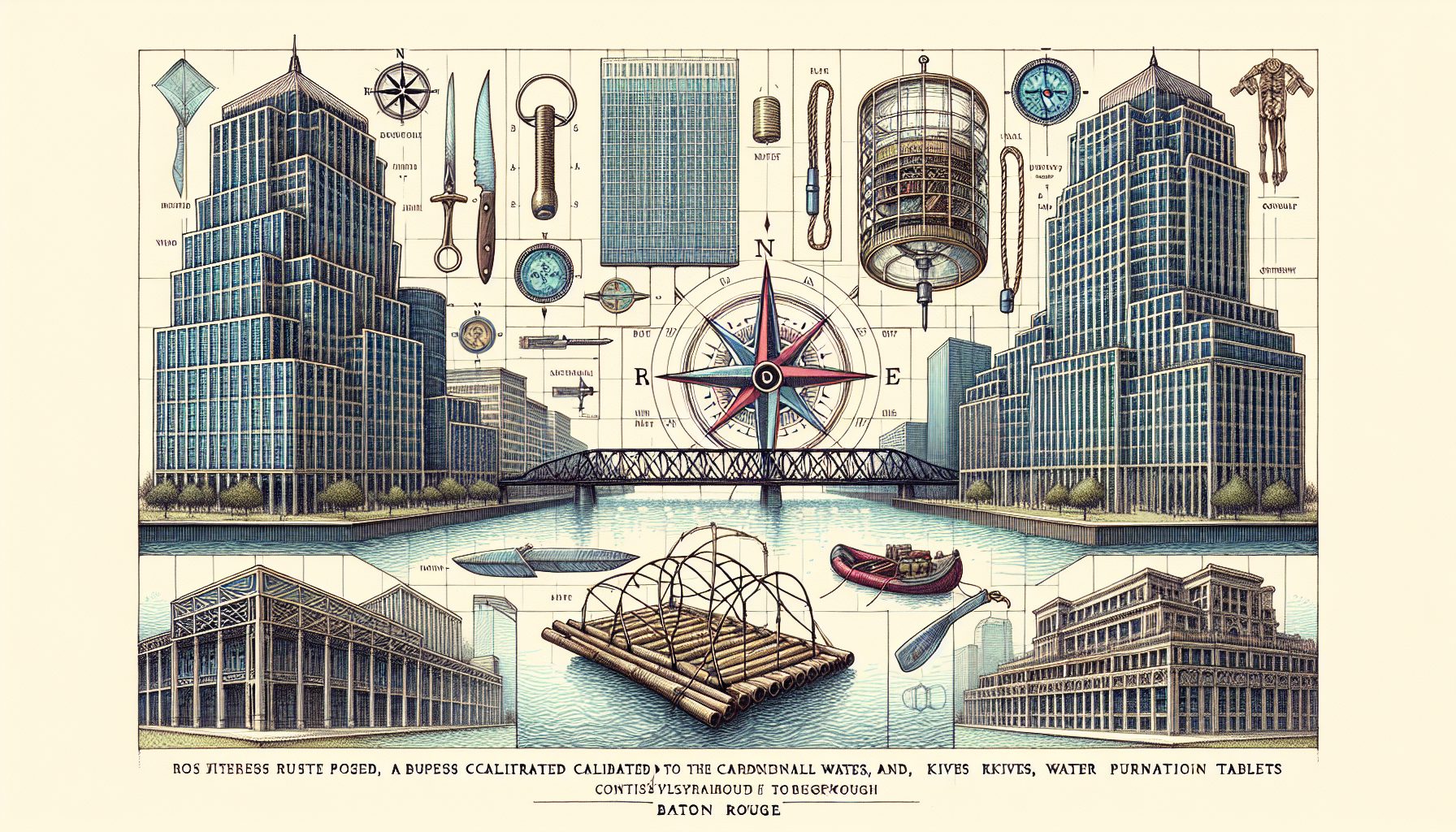

7 Questions To Assess Your Industrial Security

1. Do you have a safety-critical process controlled by a computer-based system?

2. Is the system connected to the corporate network or other networks (or to dial-up modems)?

3. Is this system based on a common operating system, such as Windows or Unix?

4. Is there relatively free physical access to the control systems?

If so, ask yourself:

5. Is the system fully protected by firewall, antivirus software and regular security patches?

6. Do you fully understand the real-life impact viruses, worms, and hackers could have?

7. Are you confident that your vendor has properly assessed security and implemented appropriate security measures?

Source: Justin Lowe, PA Consulting Group
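
For readers who want to run these questions across an inventory of plant systems, the checklist can be sketched in a few lines of Python. This is an illustrative sketch, not a tool from Lowe or PA Consulting: the class name, field names, and labels are invented here, and the code assumes that a “yes” to any of questions 1 through 4 is enough to trigger questions 5 through 7, which is one reading of “If so.”

```python
from dataclasses import dataclass


@dataclass
class ControlSystemAnswers:
    """Yes/no answers to the seven sidebar questions for one system.

    Field names are hypothetical; they simply mirror the questions.
    """
    safety_critical: bool           # Q1
    networked_or_dialup: bool       # Q2
    common_os: bool                 # Q3
    open_physical_access: bool      # Q4
    fully_protected: bool           # Q5: firewall, antivirus, patches
    impact_understood: bool         # Q6
    vendor_security_assessed: bool  # Q7


def exposure_factors(a):
    """Return a label for every 'yes' among questions 1-4."""
    checks = [
        (a.safety_critical, "safety-critical process under computer control"),
        (a.networked_or_dialup, "connected to other networks or modems"),
        (a.common_os, "runs a common operating system"),
        (a.open_physical_access, "relatively free physical access"),
    ]
    return [label for answer, label in checks if answer]


def open_gaps(a):
    """Return a label for every 'no' among questions 5-7.

    Assumption: the follow-up questions apply only if at least one
    of questions 1-4 was answered 'yes'.
    """
    if not exposure_factors(a):
        return []
    checks = [
        (a.fully_protected, "not fully protected and patched"),
        (a.impact_understood, "real-life impact not understood"),
        (a.vendor_security_assessed, "vendor security not verified"),
    ]
    return [label for answer, label in checks if not answer]


# Hypothetical example: a networked Windows-based pump controller.
pump_controller = ControlSystemAnswers(
    safety_critical=True,
    networked_or_dialup=True,
    common_os=True,
    open_physical_access=False,
    fully_protected=False,
    impact_understood=True,
    vendor_security_assessed=False,
)
print(exposure_factors(pump_controller))
print(open_gaps(pump_controller))
```

A system that comes back with both exposure factors and open gaps is a candidate for the kind of hardening and patching effort described in the article; an empty list of gaps does not prove a system is safe, only that the sidebar’s questions found nothing to flag.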