At exactly 1:47 p.m. on July 24, Miles Kelly got the call every CIO and data center manager dreads: The data center had experienced a power outage. Indeed, a power surge had shut off energy to the company's primary data center in San Francisco, and four of the building's 10 backup generators had failed to start. Three computer rooms were down.

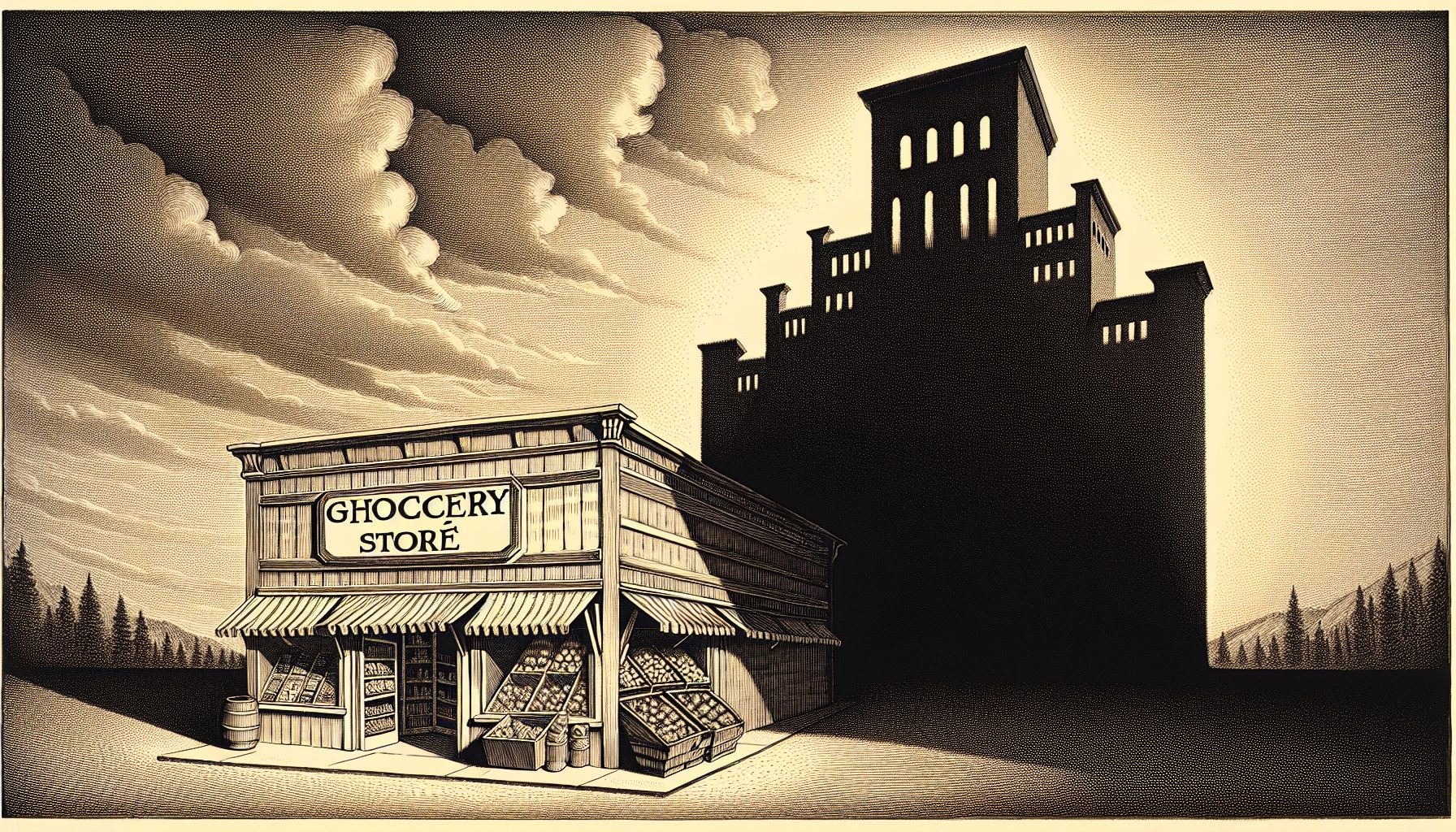

That would signal the start of a bad day for any enterprise, but for 365 Main, where Kelly serves as vice president of marketing and strategy, the problem was magnified many times over. That's because 365 Main isn't just any business: It's one of the nation's top data center managers, or co-location service providers. There are more than 75,000 servers in its 227,000-square-foot San Francisco facility, supporting hundreds of customers, including such high-profile companies as Craigslist, Sun Microsystems, Six Apart and the Oakland Raiders.

“When the failure of a data center becomes a bigger issue is when you have all these Web services and start-ups that have their data center services only at this one site,” says James Staten, principal analyst in the infrastructure and operations group at Forrester Research.

When the four 2.1-megawatt diesel engine-generator units failed to kick in as they should have, it was a disaster in the making for 365 Main. The company promotes itself as having “The World's Finest Data Centers,” and clients rely on it for constant uptime. Prior to the incident, 365 Main could claim 100% uptime.

But on the afternoon of July 24, 40% to 45% of 365 Main's customers lost power to their equipment for about 45 minutes, Kelly says. At Sun Microsystems, sites were down 45 minutes to three hours, with most being restored in about 90 minutes, according to Will Snow, senior director of Web platforms at Sun. Although the power was out for 45 minutes, it can take a few hours to bring systems and networks back up and ensure they're working properly. Snow says Sun had a backup plan for services at 365 Main, but it wasn't complete. “We're in the process of changing our disaster recovery plans to deal with shorter outages,” Snow says. “Originally our plans were tailored for more significant outages of four-plus hours, but now we're looking to respond to very short outages such as the San Francisco outage.”

At Six Apart, four of the company's Web sites (LiveJournal, Vox, TypePad and Movable Type) were down for 90 minutes. On LiveJournal, the company posted an apology, explaining that during outages it would normally display a message telling visitors about the status of the site. “But because this was a full power outage there was a period of time where we could not access or update a status page,” the posting explained.

Fortunately, 365 Main was able to manually restart the generators that had failed to kick in automatically, which allowed it to operate on backup power until PG&E began delivering a stable power supply.