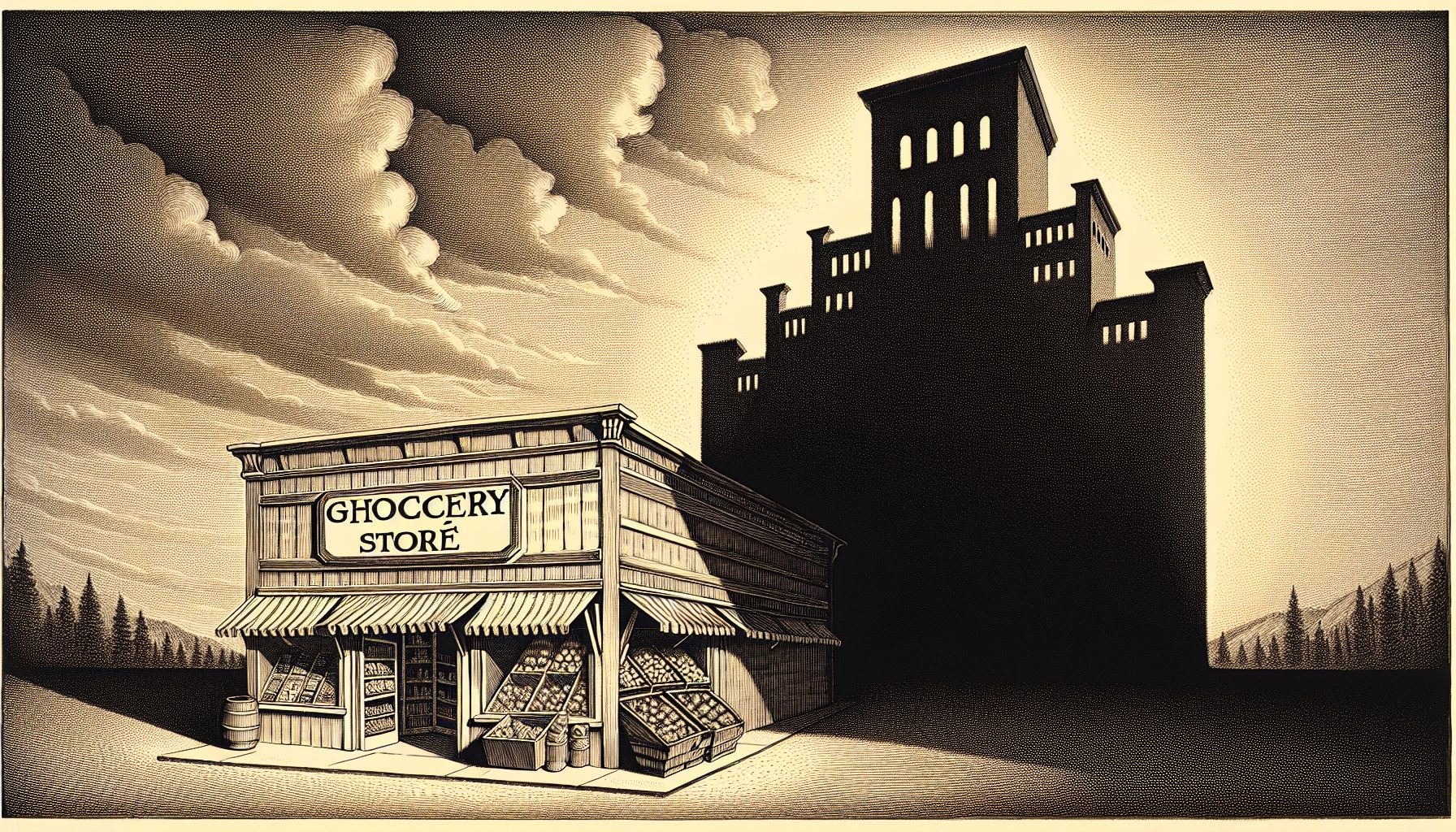

By 2024, federal government agencies will spend as much as $16.5 billion storing redundant copies of nonproduction data, according to a new report from MeriTalk, a public-private partnership focused on improving the outcomes of government IT efforts. That spending runs directly counter to the goals of the Federal Data Center Consolidation Initiative (FDCCI), says the report, which was underwritten by Actifio, a provider of copy data virtualization products.

While agencies have made it a priority to consolidate and transition to more efficient and agile cloud-based systems, 72 percent of the 150 IT managers surveyed in May 2014 said their agency has maintained or increased its number of data centers since FDCCI launched in 2010. A mere 6 percent of the managers graded their agency an “A” for consolidation efforts to meet FDCCI’s 2015 deadline.

The report, “Consolidation Aggravation: Tip of the Data Management Iceberg,” cites several main barriers to consolidation, such as overall resistance, data management challenges and data growth. These factors are preventing data center optimization and driving copy data growth, which results in higher storage costs.

The study notes that agencies don’t necessarily have too many servers, but they do have too many systems creating redundant copies of data for multiple purposes. More than a quarter of the agencies surveyed use 50 to 88 percent of their data storage to house copies or non-primary data.

Last year, 27 percent of the average agency’s storage budget went toward non-primary data. Agencies expect that to grow to 31 percent this year, which MeriTalk says translates into a $2.7 billion cost in 2014, $3.1 billion in 2015 and as much as $16.5 billion over the next 10 years.

A growing number of applications and multiple data owners are driving growth in the number of data copies, the report says, and one-third of the agencies said they don’t vary the number of copies based on the original document’s significance or the likelihood that it will be used again.

“We’ve seen the dramatic impact of a more holistic approach to copy data management in the private sector for years,” says Ash Ashutosh, founder and CEO of Actifio. “Frankly I’m not surprised by the magnitude of the potential savings at the federal level, or that this has now come to light as a significant barrier to FDCCI.”

The majority of survey respondents think better management of copy data will help their agency’s consolidation efforts under FDCCI succeed. However, only 9 percent of these agencies have deployed projects to better manage storage and data growth. As they approach the FDCCI deadline, agencies need to shift the discussion of FDCCI from server virtualization to enhanced data management and virtualization, the report states.

“Data and application sprawl are the enemies of government IT efficiency,” says Steve O’Keeffe, founder of MeriTalk. “We need leadership to empower federal IT innovators to change the failing equation. We need a cultural and acquisition shift to enable new models and the shared services that will unlock new efficiencies and real savings.”